Understanding the Impact of Transformative AI Models

The rapid advances in artificial intelligence over the past few years have ushered in an era in which sophisticated models are integral to both professional and personal life. It is revealing that almost all leading language models build on a single pioneering paper, "Attention Is All You Need" (Vaswani et al., 2017), which introduced the transformer architecture. This brief but influential paper stirred significant excitement and anxiety in the tech community and marked a major shift in how we approach machine learning. The shift did not stop at natural language processing. It also reshaped computer vision, giving rise to a new generation of vision-language models (VLMs). These models can tackle tasks ranging from segmentation and depth estimation to image generation, often with minimal fine-tuning, and on many benchmarks they now leave legacy models trailing behind.

That said, it is critical not to assume that the transformer is the sole pathway to better AI. The industry still grapples with surprisingly basic failures, such as miscounting the letters in a word as simple as "strawberry." Meanwhile, alternatives such as neuromorphic computing, photonic neural networks, and other methods are emerging, each offering a different approach to intelligent system design.

Today, I'd like to zero in on a concept rooted in the influential work of David Marr, particularly his book *Vision*. Marr's insights compel us to adopt a first-principles methodology when tackling complex problems, and edge detection in images is a topic well worth dissecting in that spirit. Much like Darwin's theory of evolution, Marr's work drew together ideas from disparate fields, in his case neurophysiology and computer vision, to advance our understanding of visual processing.
Specifically, we'll examine several algorithms for detecting edges in images and their fascinating parallels to neural activity in biological systems. This examination offers insight into how these algorithms operate and highlights the principles that govern how we perceive and process visual information.

Understanding the Prewitt Operator

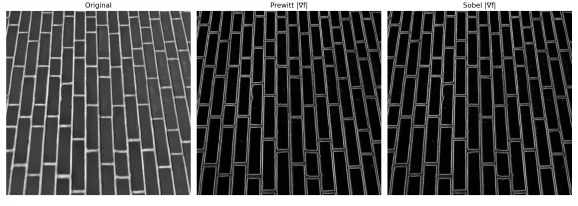

The Prewitt operator stands out by treating all neighboring pixels equally. Its convolution kernels for the x and y directions are simple 3×3 matrices. Each one takes the intensity difference across the center pixel and gives every row (or column) of the neighborhood the same weight. The result is a gradient estimate with a small amount of built-in averaging, but without extra emphasis on the pixels closest to the center.
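For reference, the Prewitt kernels in their usual textbook form are written out below. Sign and orientation conventions vary between sources. This sketch assumes the kernels are slid over the image without flipping (as a correlation) and that $y$ increases downward along the image rows:

$$
K_x = \begin{bmatrix} -1 & 0 & 1 \\ -1 & 0 & 1 \\ -1 & 0 & 1 \end{bmatrix},
\qquad
K_y = \begin{bmatrix} -1 & -1 & -1 \\ 0 & 0 & 0 \\ 1 & 1 & 1 \end{bmatrix}
$$

Every entry in a row or column carries the same weight. The Sobel operator, by contrast, doubles the weight of the center row or column.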

When both kernels are applied, they give a horizontal response $G_x$ and a vertical response $G_y$ at each pixel. The gradient magnitude is calculated as

$$G = \sqrt{G_x^2 + G_y^2}$$

and the corresponding direction is determined by

$$\theta = \operatorname{atan2}(G_y, G_x)$$

Edges manifest where the gradient magnitude is notably high, and the response fades in more uniform areas.
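The gradient computation can be sketched in pure Python. This sketch assumes a grayscale image stored as a list of rows, applies the kernels as a correlation (without flipping), and leaves border pixels at zero. All three choices are simplifying assumptions for illustration. Libraries such as SciPy (`scipy.ndimage.prewitt`) provide optimized versions with configurable border handling.

```python
import math

# Prewitt kernels (usual textbook form): uniform weights along each row/column.
PREWITT_X = [[-1, 0, 1],
             [-1, 0, 1],
             [-1, 0, 1]]
PREWITT_Y = [[-1, -1, -1],
             [0, 0, 0],
             [1, 1, 1]]


def apply_kernel(image, kernel):
    """Correlate a 3x3 kernel with every interior pixel; borders stay at 0."""
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            out[r][c] = sum(kernel[i][j] * image[r + i - 1][c + j - 1]
                            for i in range(3) for j in range(3))
    return out


def prewitt(image):
    """Return (magnitude, direction) maps; direction is atan2(Gy, Gx) in radians."""
    gx = apply_kernel(image, PREWITT_X)
    gy = apply_kernel(image, PREWITT_Y)
    mag = [[math.hypot(x, y) for x, y in zip(rx, ry)] for rx, ry in zip(gx, gy)]
    ang = [[math.atan2(y, x) for x, y in zip(rx, ry)] for rx, ry in zip(gx, gy)]
    return mag, ang


# A vertical step edge: intensity jumps from 0 to 10 between columns 2 and 3.
img = [[0, 0, 0, 10, 10, 10] for _ in range(5)]
mag, ang = prewitt(img)
print(mag[2])  # [0.0, 0.0, 30.0, 30.0, 0.0, 0.0]
```

The direction at the step is 0 radians, meaning the gradient points along +x, across the vertical edge.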

Limitations of Basic Operators

While the Prewitt operator is a step forward in edge detection, it is not without its pitfalls. First, it is susceptible to noise. Because it is built on differences between neighboring pixels with only minimal averaging, random intensity fluctuations produce spurious edge responses. Second, it generates thick, ambiguous edges. Thresholding the gradient magnitude flags every pixel that exhibits a steep gradient, including pixels that sit next to an edge rather than precisely on it. These drawbacks highlighted the need for a more refined approach to edge detection, which John Canny ultimately provided in his pioneering work.
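Both pitfalls show up in a small pure-Python experiment. It computes the Prewitt magnitude, then flags every pixel above a threshold. The image sizes, the intensities, and the threshold of 5 are hypothetical values chosen for illustration. A one-pixel intensity step comes out as a two-pixel-wide edge, and a single noisy pixel in a flat patch comes out as a ring of spurious edge pixels.

```python
import math


def prewitt_magnitude(image):
    """Prewitt gradient magnitude for interior pixels (borders left at 0)."""
    h, w = len(image), len(image[0])
    mag = [[0.0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            # Prewitt x: right-minus-left difference, summed over three rows.
            gx = sum(image[r + i][c + 1] - image[r + i][c - 1] for i in (-1, 0, 1))
            # Prewitt y: below-minus-above difference, summed over three columns.
            gy = sum(image[r + 1][c + j] - image[r - 1][c + j] for j in (-1, 0, 1))
            mag[r][c] = math.hypot(gx, gy)
    return mag


def threshold(mag, t):
    """Flag every pixel whose gradient magnitude exceeds t (1 = edge)."""
    return [[1 if m > t else 0 for m in row] for row in mag]


# Pitfall 1: a clean vertical step, with a single jump between columns 2 and 3.
step = [[0, 0, 0, 10, 10, 10] for _ in range(5)]
print(threshold(prewitt_magnitude(step), t=5)[2])  # [0, 0, 1, 1, 0, 0]

# Pitfall 2: a flat patch with a single noisy pixel in the middle.
noisy = [[0] * 5 for _ in range(5)]
noisy[2][2] = 10
ring = threshold(prewitt_magnitude(noisy), t=5)
print(sum(map(sum, ring)))  # 8 spurious edge pixels around the spike
```

Both columns flanking the step exceed the threshold, so the edge comes out two pixels thick. Canny's non-maximum suppression is designed to thin such responses to a single pixel.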

Wrapping Up Edge Detection

What we've explored here is more than a technical walkthrough. It traces a path from biological inspiration to practical application in image processing. Starting from the basic workings of retinal ganglion cells, we traced the evolution of edge detection through the Laplacian of Gaussian (LoG) operator to the Canny edge detector, one of the most widely used algorithms in the field. Let's break down why that evolution matters:

- Edges correspond to zero-crossings in the second derivative of image intensity, a concept introduced by Marr and Hildreth and deeply grounded in neurophysiology.

- The LoG operator merges noise reduction with edge detection: Gaussian filtering suppresses noise before the Laplacian locates those zero-crossings.

- Canny builds on these principles with non-maximum suppression and hysteresis thresholding, producing edges that are thin and well connected rather than fragmented.

- Line and circle detection with the Hough transform operates directly on edge maps.

- Contour-based object detection still holds its ground in certain domains, despite newer learning-based paradigms.

- Medical image segmentation uses edge-based preprocessing to complement complex models when identifying thin structures accurately.
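The LoG bullet above can be illustrated with a one-dimensional sketch. It smooths a signal with a Gaussian, applies a discrete second difference (the 1D Laplacian), and reports where the result changes sign. Working in 1D, the choice of sigma, the kernel radius of 3 sigma, and replicated borders are simplifying assumptions for illustration. The 2D operator applies the same idea with a 2D Gaussian and Laplacian.

```python
import math


def gaussian_kernel(sigma):
    """Normalized 1D Gaussian sampled over +/- 3 sigma."""
    radius = max(1, math.ceil(3 * sigma))
    weights = [math.exp(-(x * x) / (2 * sigma * sigma)) for x in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def smooth(signal, kernel):
    """Filter with a symmetric kernel, replicating the border samples."""
    r = len(kernel) // 2
    n = len(signal)
    out = []
    for i in range(n):
        acc = 0.0
        for j, w in enumerate(kernel):
            acc += w * signal[min(max(i + j - r, 0), n - 1)]
        out.append(acc)
    return out


def log_response(signal, sigma):
    """Gaussian smoothing followed by a discrete second difference (1D Laplacian)."""
    s = smooth(signal, gaussian_kernel(sigma))
    return [0.0] + [s[i - 1] - 2 * s[i] + s[i + 1] for i in range(1, len(s) - 1)] + [0.0]


def zero_crossings(response):
    """Indices i where the response changes sign between samples i and i + 1."""
    return [i for i in range(len(response) - 1) if response[i] * response[i + 1] < 0]


step = [0] * 10 + [10] * 10  # a step edge between samples 9 and 10
print(zero_crossings(log_response(step, sigma=1.5)))  # [9]
```

Smoothing and differencing are both linear. Up to border handling, doing them in two steps is therefore equivalent to filtering once with a sampled LoG kernel. The two-step form is used here only because it is easier to read.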
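The two Canny stages named above, non-maximum suppression and hysteresis thresholding, can be sketched in pure Python. The input is assumed to be a gradient magnitude map `mag` and a direction map `ang` in radians, computed as `atan2(gy, gx)` with `gy` increasing down the rows (for example, from a Prewitt or Sobel operator). Quantizing directions to four angles, the tie-breaking rule, and the example thresholds are illustrative choices, not a definitive reproduction of Canny's formulation.

```python
import math
from collections import deque

# Neighbor offsets (dr, dc) along each quantized gradient direction, with the
# row index increasing downward (so 45 degrees points down and to the right).
OFFSETS = {0: (0, 1), 45: (1, 1), 90: (1, 0), 135: (1, -1)}


def non_maximum_suppression(mag, ang):
    """Keep a pixel only if it is a local maximum along its gradient direction."""
    h, w = len(mag), len(mag[0])
    out = [[0.0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            deg = math.degrees(ang[r][c]) % 180  # direction modulo 180 degrees
            q = min(OFFSETS, key=lambda a: min(abs(deg - a), 180 - abs(deg - a)))
            dr, dc = OFFSETS[q]
            ahead, behind = mag[r + dr][c + dc], mag[r - dr][c - dc]
            # >= on one side and > on the other breaks ties on flat-topped ridges,
            # so a two-pixel plateau is thinned to a single pixel.
            if mag[r][c] >= ahead and mag[r][c] > behind:
                out[r][c] = mag[r][c]
    return out


def hysteresis(nms, low, high):
    """Keep strong pixels (> high) and weak pixels (> low) 8-connected to them."""
    h, w = len(nms), len(nms[0])
    edges = [[0] * w for _ in range(h)]
    queue = deque((r, c) for r in range(h) for c in range(w) if nms[r][c] > high)
    for r, c in queue:
        edges[r][c] = 1
    while queue:  # breadth-first growth from strong pixels into weak ones
        r, c = queue.popleft()
        for dr in (-1, 0, 1):
            for dc in (-1, 0, 1):
                rr, cc = r + dr, c + dc
                if 0 <= rr < h and 0 <= cc < w and not edges[rr][cc] and nms[rr][cc] > low:
                    edges[rr][cc] = 1
                    queue.append((rr, cc))
    return edges


# Hypothetical input: a vertical edge whose gradient response is two pixels wide.
mag = [[0.0] * 6] + [[0.0, 0.0, 30.0, 30.0, 0.0, 0.0] for _ in range(3)] + [[0.0] * 6]
ang = [[0.0] * 6 for _ in range(5)]
edges = hysteresis(non_maximum_suppression(mag, ang), low=10, high=20)
print(edges[2])  # [0, 0, 1, 0, 0, 0]
```

Hysteresis uses two thresholds instead of one. A weak response survives only when it connects to a strong one, so isolated weak responses are dropped while faint stretches along a real edge keep the contour connected.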