Antiracist economist Kim Crayton powerfully asserts that “intention without strategy is chaos,” a sentiment that resonates deeply within the tech industry. While we have acknowledged the biases and blind spots that lead to harmful and unethical technological outcomes for marginalized groups, the pressing question remains: what concrete actions can we take to rectify these issues? Merely wanting to make technology safer is insufficient; we require a clear, actionable strategy to effect real change.

This chapter serves as your roadmap for integrating safety principles into your design workflows. We'll outline methods you can adopt to craft safer technology, persuade stakeholders of the urgency of this initiative, and address the often-raised counterpoint that diversity alone suffices for progress. Spoiler alert: while diversity is essential, it doesn't resolve the deeper systemic problems of unethical technology.

The focus here is to equip you with tactical steps for a process we’ll call the “Process for Inclusive Safety,” which you can apply whether you’re embarking on an entirely new product or enhancing an existing one. Use it to design safer, more inclusive tools. It covers five core actions:

1. Conducting research

2. Creating archetypes

3. Brainstorming potential problems

4. Designing actionable solutions

5. Testing thoroughly for safety

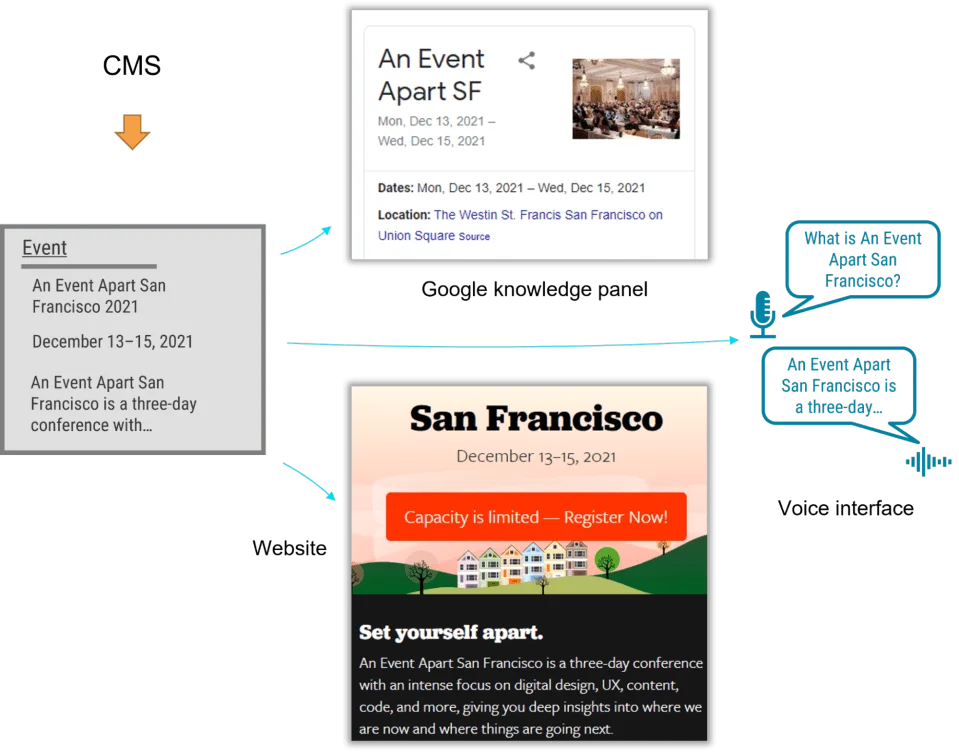

As illustrated in **Fig 5.1**, this process is adaptable; not every step will be relevant in every scenario. Tailor it to fit the unique needs of your project and context. The goal here is to foster a safety-first approach that integrates seamlessly into your existing design practices.

And here’s an invitation: once you implement this framework, I’d love your feedback or insights on how it worked for your team. This methodology is a living document, aiming to evolve and remain practical for tech professionals navigating these challenges daily.

If you are working on products designed specifically for vulnerable populations, such as survivors of domestic violence or sexual assault or people affected by substance abuse, Chapter 7 covers the considerations unique to that work. The guidance in this chapter is tailored for products targeting a general audience, which, statistically, will likely include individuals who need protection against various forms of harm.

The process for inclusive safety

When you embark on designing with safety in mind, your objectives should be clear:

- Pinpoint how your product might facilitate abuse,

- Develop strategies to thwart that abuse,

- Empower vulnerable users to reclaim autonomy and control.

The Process for Inclusive Safety is a framework I introduced in 2018, capturing the strategies I've found effective in designing for safety. Each element of this process can be scaled to fit your specific context and integrates into the stages of your design practice.

If you are crafting technology specifically for vulnerable populations or survivors of trauma, note that this chapter addresses broader safety principles. Your work will need to balance meeting the general population’s needs while ensuring those at risk receive the specific support and solutions they require to feel safe.

Conducting research

The first step in this process is thorough research. It has a dual focus: a broad examination of how technology can be abused, and a specific look at the experiences of those involved, both survivors and perpetrators. In this phase, your team will explore interpersonal harm, investigate various safety and security concerns, and address broader issues such as data privacy, algorithmic bias, and online harassment.

Broad research should lay your foundation. Investigate safety concerns surrounding similar products; for instance, if your team is developing a smart device, uncover how existing models have been misused. If artificial intelligence is part of your project, delve into documented biases and discrimination encountered in current AI applications. Resources like [Google Scholar](https://scholar.google.com) can provide invaluable academic studies on these topics.

Next comes the specific research segment, where engaging directly with survivors—when appropriate—can yield profound insights. Collaborate with experts and advocates in relevant fields before broaching sensitive discussions with survivors. It’s critical to recognize the potential trauma involved and to compensate individuals for sharing their experiences, reinforcing ethical engagement in your research efforts.

Similarly, understanding the perspective of abusers is valuable, but do so by analyzing existing research on their behavior rather than attempting to engage them directly. You'll want to identify how technology can be weaponized against others, the methods perpetrators use to conceal their actions, and the rationalizations they provide for their behavior.

Creating archetypes

With your research in hand, it’s time to synthesize your findings into archetypes representing potential abusers and survivors. Unlike conventional personas, which are grounded in user interviews, archetypes are generalized representations derived from broader research. They capture underlying safety issues in much the same way that accessibility needs can be designed for without relying on specific user interviews.

For example, envision an abuser archetype who seeks to exploit your product as a means of harm. They could intend to surveil strangers or exert control over individuals they are familiar with. In contrast, the survivor archetype embodies someone on the receiving end of abuse, with varied levels of awareness about their situation.

Creating multiple survivor archetypes may be beneficial, as individuals may find themselves in different scenarios of abuse. These archetypes can guide you in designing solutions that not only prevent harm from abusers but also empower survivors to regain control over their circumstances.

To facilitate this process, develop persona-like elements that emphasize the goals of both archetypes. The abuser’s objective would typically involve succeeding in the identified harms, while the survivor’s aim would be to prevent, identify, and ultimately cease the abuse they face. These goals will guide your brainstorming sessions for potential solutions.

While the abuser/survivor framework covers many scenarios, it won't apply universally. Adjust your archetype approach as you uncover different safety issues, ensuring that your models reflect the complexities of the experiences associated with your product.

Brainstorming problems

Having outlined your archetypes, the next step is brainstorming potential problems. Focus on generating new instances of abuse or safety concerns not covered in your previous research. The aim is to be exhaustive in identifying any harmful scenarios related to your product.

How could your technology be misused beyond what's already listed? Engaging in a ‘Black Mirror’ style brainstorming session may be a fun way to stimulate creative thinking on how your product might fail dramatically when faced with unforeseen misuses. Set a specific time for this—perhaps half an hour—and then pivot to identifying more realistic harms.

Once you've compiled a solid list of potential abuses, recognize that you’re unlikely to have covered every possible form of harm. Feeling anxious about that is a natural part of this process. Document your findings, reaffirm your commitment to safety, and remain open to user feedback once your product is released.

Designing solutions

Armed with a comprehensive list of possible abuses and your archetypes, the next phase involves developing strategic solutions. Here, you’ll brainstorm ways to counter the abuser's objectives while facilitating and supporting the survivor's goals. This step should fit within the existing phases of your design process.

Pose questions that can guide your team in creating effective safeguards and support mechanisms for your users. For instance, can you design your product to prevent abuse from occurring? If that's not feasible, what barriers can be erected to mitigate potential harm? Explore how the product can alert users to abuse, aid them in understanding how to stop it, or identify user behaviors indicative of harmful situations.

In some instances, particularly in sensitive categories such as reproductive health, it may be viable for your application to include features that proactively support users experiencing abuse, for example by signaling to them when they can seek help. However, proceed with caution to ensure that such features do not expose users to further risk.

Testing for safety

The final piece of this puzzle is to subject your prototypes to rigorous testing from both the abuser’s and survivor’s perspectives. This important stage aims to assess how effectively your safety parameters work, identify any deficiencies, and confirm your designs will safeguard users before launching your product.

Safety testing should ideally occur alongside usability testing. If your organization generally skips usability testing, introducing safety testing can be an opportunity to carry out both at once. Remember, your testing needs to account for both archetypes, so plan accordingly to cover their differing experiences.

Approach the testing like other usability assessments—invite outsiders who are unfamiliar with the product to interact with it while verbalizing their thoughts. Their unfamiliarity will help highlight practical interactions you may overlook as a designer closely tied to the product’s lifecycle.

Abuser testing

The aim of this testing phase centers on understanding the potential for users to weaponize your product. Unlike traditional usability testing, your goal here is to make it challenging, if not impossible, for individuals to achieve their harmful objectives. Ensure your tests are guided by the abuser archetype's goals identified earlier, providing insights that can lead you to refine your design further.

Prioritizing user safety in product design

Abuser testing underscores a critical issue: the design of tech products can inadvertently facilitate harmful behaviors, particularly stalking. Take a hypothetical fitness app, for instance. If a malicious user sets out to uncover the location of an ex-partner, even a seemingly secure private profile can become a hunting ground. Features such as running routes or follower connections can expose sensitive information. With a little tenacity, an abuser could exploit design oversights to achieve their aim.

What’s especially important here is the reminder to developers: if your app allows for such vulnerabilities, it’s time to reconsider your design principles. Discovering that users can be stalked despite safety measures means backtracking to refine those safeguards. Designing with the intent to protect, rather than just attract, is an uphill battle that requires persistent iteration.
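As a rough illustration of the fitness-app scenario, the sketch below shows one way an abuser test could be expressed as an automated check. Everything in it is an assumption made for illustration: the `Profile` model, its field names, and the `visible_routes` rule are hypothetical and not taken from any real app. It only covers running routes; leaks through follower connections would need their own checks.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Profile:
    """Hypothetical fitness-app profile; all field names are assumptions."""
    owner: str
    is_private: bool = True
    approved_followers: set[str] = field(default_factory=set)
    blocked: set[str] = field(default_factory=set)
    routes: list[str] = field(default_factory=list)


def visible_routes(profile: Profile, viewer: str) -> list[str]:
    """Return the routes `viewer` may see, denying access by default."""
    if viewer == profile.owner:
        return list(profile.routes)
    # A block always wins, even over an earlier follow approval.
    if viewer in profile.blocked:
        return []
    # Private profiles expose routes only to approved followers.
    if profile.is_private and viewer not in profile.approved_followers:
        return []
    return list(profile.routes)


def abuser_goal_achieved(profile: Profile, abuser: str) -> bool:
    """Abuser-archetype goal: learn where the target runs."""
    return len(visible_routes(profile, abuser)) > 0


# The test passes when the abuser archetype FAILS to reach their goal.
target = Profile(owner="ana", routes=["riverside loop"],
                 approved_followers={"ben"})
assert not abuser_goal_achieved(target, "ex_partner")
```

The point of framing the check around the abuser's goal, rather than around a feature, is that the same assertion can be rerun whenever a new feature touches location data.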

Empowering survivors through thoughtful design

The concept of "survivor testing" adds a layer of empathy that is often missing in product development. It emphasizes the need to empower users who have been targeted, not just to prevent abuse but also to enhance their sense of safety and control. For example, if a survivor interacts with a smart thermostat that gets manipulated by an abuser, the testing must ensure that this user can easily access usage logs and regain control. Simple, intuitive instructions for removing an unauthorized user and resetting passwords can make all the difference.
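To make the thermostat example concrete, here is a minimal sketch of the two capabilities a survivor test would look for: a readable usage log and a way to remove an unauthorized user. The `Thermostat` class, its methods, and the single-owner permission model are all hypothetical assumptions. In particular, the sketch does not settle who should count as the owner, which is itself a safety question when the abuser set up the device.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Thermostat:
    """Hypothetical shared smart thermostat; the API is an assumption."""
    owner: str
    authorized: set[str] = field(default_factory=set)
    log: list[tuple[str, str]] = field(default_factory=list)

    def __post_init__(self) -> None:
        # The owner is always allowed to control the device.
        self.authorized.add(self.owner)

    def set_temperature(self, user: str, celsius: float) -> bool:
        """Apply a change if allowed; record every attempt either way."""
        if user not in self.authorized:
            self.log.append((user, "denied"))
            return False
        self.log.append((user, f"set {celsius}C"))
        return True

    def remove_user(self, requester: str, target: str) -> bool:
        """Let the owner revoke another user's access in one step."""
        if requester != self.owner or target == self.owner:
            return False
        self.authorized.discard(target)
        self.log.append((requester, f"removed {target}"))
        return True


# Survivor test: can the user see who changed what, then regain control?
device = Thermostat(owner="sam", authorized={"alex"})
device.set_temperature("alex", 30)
assert device.log == [("alex", "set 30C")]   # the change is attributable
assert device.remove_user("sam", "alex")     # access is revoked
assert not device.set_temperature("alex", 30)
```

Logging denied attempts as well as successful ones is a deliberate choice in this sketch: it lets a survivor see that someone is still trying to control the device after access was removed.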

Moreover, survivor testing doesn't always call for separate sessions; sometimes its goals coincide with those of abuser testing. When thwarting an abuser prevents stalking, the same effort serves dual purposes, protecting both individual users and the integrity of the platform. This highlights a broader truth: safeguarding users should be a default priority, not an afterthought.

Integrating compassionate design with stress testing

The idea of stress testing as proposed by Eric Meyer and Sara Wachter-Boettcher deserves attention. It challenges the conventional mindset that design should cater to ideal users having a good experience. By instead considering users facing high-stress or traumatic situations, we can expose weaknesses in our designs. Are there features that become confusing when someone is preoccupied with their personal struggle? Are critical updates buried in a web of information?

This not only elevates the user experience but also fosters a sense of community and understanding. Taking the time to ask how a product can create peace during stressful times can potentially transform its reception. As we move forward, the challenge is to make compassion a fundamental design principle, leading to products that respect and prioritize user dignity, especially in vulnerable situations.

The takeaway? It’s clear that incorporating these principles into product design isn't just good ethics; it's smart business. In an era increasingly focused on user welfare, developers who prioritize safety and compassion will not only meet users' needs but also build trust that can translate into long-term loyalty. If you're in product development, the imperative is clear: design with intent to shield users and empower survivors. Your users deserve nothing less.