How we replaced Ingress-NGINX at Stack Overflow

With the retirement of Ingress-NGINX, we faced the challenge of finding an alternative traffic routing solution for our Kubernetes infrastructure.

The announcement back in November 2025 of the impending retirement of Ingress-NGINX took us by surprise. The tool had been pivotal for routing traffic in our Kubernetes environment ever since we adopted it. We had considered transitioning to the Gateway API because it promised enhanced features, but with Ingress-NGINX performing adequately, we were hesitant to shift focus. The retirement forced our hand, pushing a new traffic routing solution onto our immediate development agenda.

Crafting a transition strategy

Faced with an overwhelming set of options, we were tasked with refining our choices before diving into implementation and testing. We aimed to leverage the Gateway API for its new capabilities and finer role separation while remaining flexible enough to consider another Ingress controller if needed. Time was of the essence, so we balanced thoroughness with urgency, ultimately establishing criteria that whittled our focus down to three Gateway implementations and two Ingress controllers.

Gateway API Candidates

- NGINX Gateway Fabric

- Traefik

- Istio

Ingress Options

- F5 NGINX Ingress

- Traefik

The implementation had to be listed as fully conformant in the list of approved implementations, which gave us a reliable foundation for our evaluations. Because we operate across GCP and Azure, we could also exclude cloud-specific solutions. The v1.4 feature matrix and third-party benchmarks further narrowed our focus to the implementations above.

Regrettably, HAProxy didn't make the cut for our experiments because it was deemed outdated, despite its reliable history in our data center before we moved to GKE. As of this writing, it has since reached fully conformant status.

As a fallback Ingress solution, Traefik briefly appealed because of its compatibility with NGINX annotations. We then discovered that many of our existing annotations weren't supported, which diminished its viability. F5 NGINX Ingress initially appeared to be a dependable option, but it required custom resources for advanced routing, an incompatibility that complicated integration with other controller types.

Therefore, we ruled out switching to another Ingress solution early on.

Analyzing usage patterns

To shape our testing scenarios, we exported all Ingress objects from our production clusters into YAML files, then used Claude to categorize them by use case. Most of our routing was fairly straightforward, which allowed us to narrow our testing to approximately six distinct scenarios along with two scalability benchmarks.

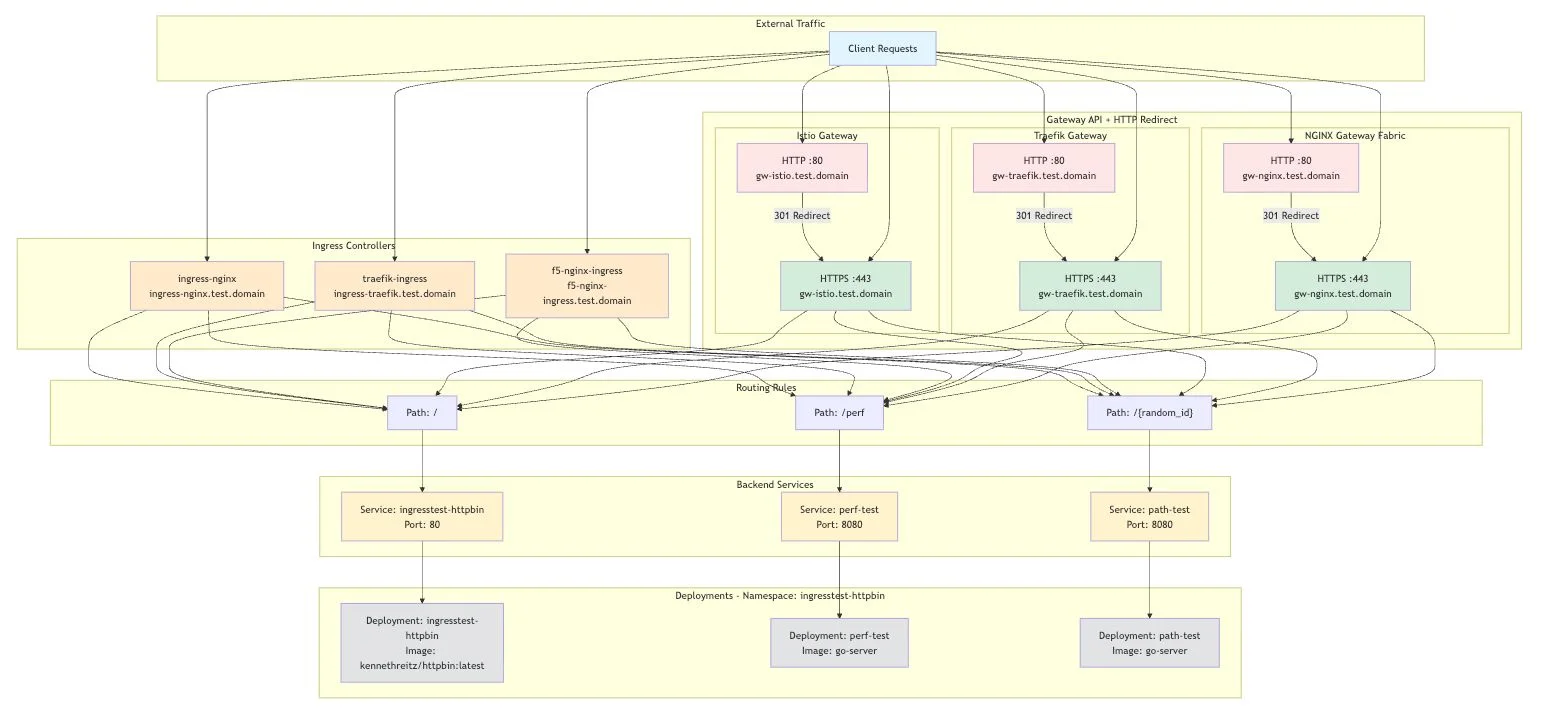

Constructing a testing environment

Our testing infrastructure primarily utilized HTTPBin as a backend. It serves as an exceptional resource for anything HTTP-related, enabling in-depth analysis of request and response headers. For instance, we addressed a case where it was crucial to dynamically adjust host headers for specific traffic using HTTPBin’s /headers endpoint, which returns the request headers as a JSON response. This capability allowed us to establish test cases that validated header modifications effectively.
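A header check of this kind could be sketched in Go roughly as follows. This is a minimal illustration, not our actual test code: the function names and the host `app.example.test` are hypothetical, the response shape assumed is the `{"headers": {...}}` object with string values returned by HTTPBin's /headers, and a local stand-in server replaces the gateway and HTTPBin so the example runs on its own.

```go
package main

import (
	"encoding/json"
	"fmt"
	"net/http"
	"net/http/httptest"
)

// headersResponse mirrors the JSON shape assumed for HTTPBin's /headers
// endpoint: {"headers": {"Host": "...", ...}}.
type headersResponse struct {
	Headers map[string]string `json:"headers"`
}

// echoedHeaders requests baseURL+"/headers" while sending the given Host
// header, and returns the headers the backend reports having received.
func echoedHeaders(baseURL, host string) (map[string]string, error) {
	req, err := http.NewRequest(http.MethodGet, baseURL+"/headers", nil)
	if err != nil {
		return nil, err
	}
	req.Host = host // Host header as sent by the client to the gateway
	resp, err := http.DefaultClient.Do(req)
	if err != nil {
		return nil, err
	}
	defer resp.Body.Close()
	if resp.StatusCode != http.StatusOK {
		return nil, fmt.Errorf("unexpected status %d", resp.StatusCode)
	}
	var out headersResponse
	if err := json.NewDecoder(resp.Body).Decode(&out); err != nil {
		return nil, err
	}
	return out.Headers, nil
}

// fakeHeadersBackend is a hypothetical stand-in for HTTPBin: it echoes the
// request headers (including Host) back as JSON. It does not rewrite anything.
func fakeHeadersBackend() *httptest.Server {
	return httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		h := map[string]string{"Host": r.Host}
		for name := range r.Header {
			h[name] = r.Header.Get(name)
		}
		w.Header().Set("Content-Type", "application/json")
		json.NewEncoder(w).Encode(headersResponse{Headers: h})
	}))
}

func main() {
	srv := fakeHeadersBackend()
	defer srv.Close()

	got, err := echoedHeaders(srv.URL, "app.example.test")
	if err != nil {
		panic(err)
	}
	// Against a real gateway, this is where a test would compare the echoed
	// Host with the value the route is expected to rewrite it to.
	fmt.Println("backend saw Host:", got["Host"])
}
```

Pointing `echoedHeaders` at a gateway address instead of the stand-in turns the echoed `Host` value into a direct assertion on the header rewrite.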

A second backend was a simple Go web server deployed at perf., designed to respond quickly under high request volumes. We used it to assess gateway performance under heavy load, adding simulated latency to see how well each implementation handled surges in connections and requests. HTTPBin has a similar endpoint, but we doubted its performance under stress, so we used the Go server for our intensive tests. The architecture of our test environment was structured accordingly.
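For readers who want to reproduce a backend like this, a minimal sketch follows. It is not the server we deployed: the `delay` query parameter, the two-second cap, and all function names are assumptions made for illustration. The example starts the handler on a local test listener so it exits on its own.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
	"time"
)

// maxDelay caps the simulated latency so a typo cannot pin connections open.
const maxDelay = 2 * time.Second

// parseDelay turns a value such as "150ms" into a duration. An empty value
// means no added latency.
func parseDelay(raw string) (time.Duration, error) {
	if raw == "" {
		return 0, nil
	}
	d, err := time.ParseDuration(raw)
	if err != nil {
		return 0, err
	}
	if d < 0 || d > maxDelay {
		return 0, fmt.Errorf("delay %s outside 0..%s", d, maxDelay)
	}
	return d, nil
}

// delayHandler waits for the requested delay, then answers with a tiny body.
// It stops waiting early if the client disconnects.
func delayHandler(w http.ResponseWriter, r *http.Request) {
	d, err := parseDelay(r.URL.Query().Get("delay"))
	if err != nil {
		http.Error(w, err.Error(), http.StatusBadRequest)
		return
	}
	select {
	case <-time.After(d):
		fmt.Fprintln(w, "ok")
	case <-r.Context().Done():
		// Client went away; nothing left to write.
	}
}

func main() {
	srv := httptest.NewServer(http.HandlerFunc(delayHandler))
	defer srv.Close()

	start := time.Now()
	resp, err := http.Get(srv.URL + "/?delay=150ms")
	if err != nil {
		panic(err)
	}
	resp.Body.Close()
	fmt.Printf("status=%d elapsed=%s\n", resp.StatusCode, time.Since(start).Round(time.Millisecond))
}
```

Keeping the delay in the request rather than in server configuration means one deployment can serve the 0, 150, and 350 ms scenarios without a restart.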

Key findings from testing

Setting up the three Gateway API implementations was fairly straightforward, though I did encounter a minor frustration with Traefik’s requirement for an “entrypoint,” which sets up a TCP listener. Neglecting this aspect generates errors during gateway creation, somewhat undermining the abstraction principle.

All tested implementations handled our use cases competently. In coverage of Gateway API features, Istio came out ahead, while Traefik fell short. We quickly realized that some Gateway API functionality, impressive in theory, lacked the depth our practical applications need. For example, the header modification feature in HTTPRoute only supports static values, which forced us to fall back on custom extension points for dynamic requirements. All implementations proved flexible enough, but Istio's filter syntax was noticeably more complex than that of Traefik and NGINX.

In some scenarios, behavior unique to one implementation meant our applications would have to adapt. We currently use ngx_http_auth_request_module to forward requests to an authentication service, but Istio's external authorization behaved differently in ways that could complicate integration. Differences like these are significant hurdles that can slow a migration.

Performance benchmarks

I’ve always found performance analysis to be an area where it’s easy to get lost in details. With limited time and resources, we aimed for simplicity and practicality. At larger scales, significant disparities might emerge among these implementations, but our immediate goal was to assess whether each could meet our current scalability demands.

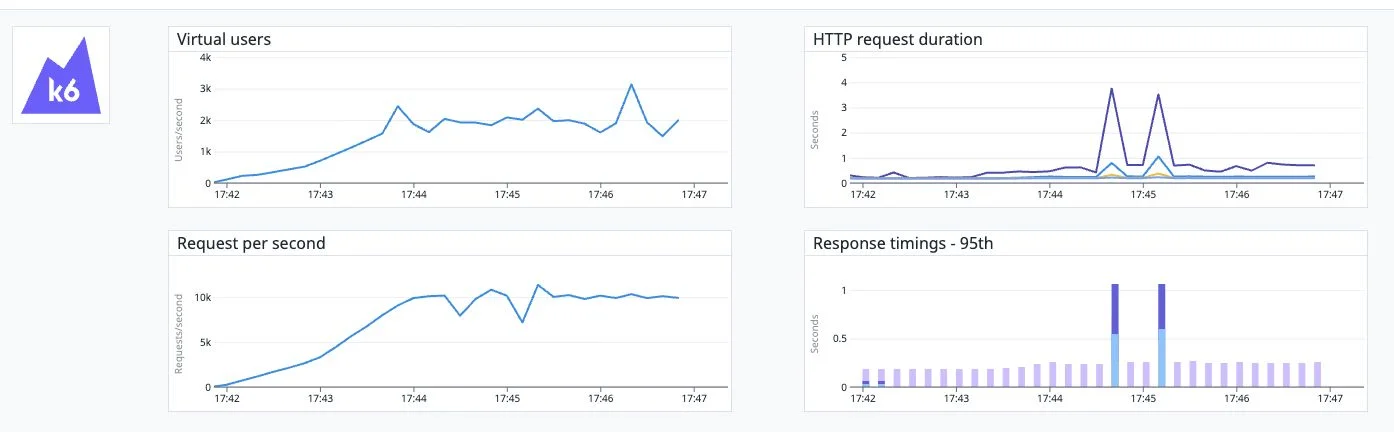

Our scalability testing focused primarily on two factors: ensuring each gateway could manage multiples of our daily traffic and evaluating performance under customer-specific ingress configurations. We set an ambitious target of 10,000 requests per second (RPS) to gauge overhead capacity beyond typical operational levels.

Our testing environment featured four gateway replicas, each on a dedicated e2-standard-4 compute node in GCP. The test client ran k6 on an Azure instance optimized for high connection volumes, specifically a Standard_DC8as_cc_v5.

Initial RPS benchmarks

We simulated backend latencies of 0, 150, and 350 ms, and all implementations performed admirably under these conditions, with fairly consistent results across the board. Here’s how they stacked up during the 150 ms latency test:

Traefik Performance

http_req_duration..............: avg=188.27ms min=176ms med=180.22ms max=820.56ms p(90)=195.11ms p(95)=219.31ms

NGINX Performance

http_req_duration..............: avg=205.34ms min=176.15ms med=183.13ms max=1.83s p(90)=244.14ms p(95)=297.59ms

Istio Performance

http_req_duration..............: avg=186.73ms min=176.03ms med=180.52ms max=3.48s p(90)=194.34ms p(95)=216.32ms

Route Creation Benchmark

We initially aimed to create 5000 HTTPRoutes successfully with each implementation, but later discovered practical limits well below that number. Third-party benchmarks had highlighted route-handling issues with Traefik and NGINX, so we replicated that test: we generated 5000 HTTPRoutes, each with a single path rule, and sent concurrent requests to check that every path was routed correctly.
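Generating that many near-identical routes is easy to script. The sketch below renders HTTPRoute manifests with one exact-match path rule each. The route names, the `/route/N` path scheme, the backend name `perf-backend`, and port 8080 are all hypothetical; only the HTTPRoute fields themselves come from the Gateway API.

```go
package main

import (
	"fmt"
	"strings"
)

// routeTemplate is a single HTTPRoute with one path rule. The indexed verbs
// are: 1 = route number, 2 = parent Gateway, 3 = backend Service, 4 = port.
const routeTemplate = `apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: bench-route-%[1]d
spec:
  parentRefs:
    - name: %[2]s
  rules:
    - matches:
        - path:
            type: Exact
            value: /route/%[1]d
      backendRefs:
        - name: %[3]s
          port: %[4]d
`

// routeManifest renders the manifest for route number i.
func routeManifest(i int, gateway, backend string, port int) string {
	return fmt.Sprintf(routeTemplate, i, gateway, backend, port)
}

// routeManifests renders n routes as one multi-document YAML stream.
func routeManifests(n int, gateway, backend string, port int) string {
	docs := make([]string, 0, n)
	for i := 0; i < n; i++ {
		docs = append(docs, routeManifest(i, gateway, backend, port))
	}
	return strings.Join(docs, "---\n")
}

func main() {
	// Two routes keep the output readable; the benchmark size would be 5000.
	fmt.Print(routeManifests(2, "gw-istio", "perf-backend", 8080))
}
```

Giving every route a distinct path makes correctness easy to check afterwards: each path either reaches the backend or it does not.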

All three implementations accepted the 5000 HTTPRoutes, but Traefik was so slow to start serving them that its test eventually timed out.
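The internals of our test are not reproduced in this post, but a convergence check that emits "paths pending, retrying" lines like those in the log that follows could look roughly like this. Everything here is an illustrative assumption: the function names, the worker count, the choice to re-probe only the paths that are still pending, and the stand-in gateway that starts serving after a fixed delay.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
	"sync"
	"time"
)

// pendingPaths probes every path concurrently (at most `workers` in flight)
// and returns the paths that are not yet answered with 200 OK.
func pendingPaths(baseURL string, paths []string, workers int) []string {
	var (
		mu      sync.Mutex
		pending []string
		wg      sync.WaitGroup
	)
	sem := make(chan struct{}, workers)
	for _, p := range paths {
		wg.Add(1)
		sem <- struct{}{}
		go func(p string) {
			defer wg.Done()
			defer func() { <-sem }()
			resp, err := http.Get(baseURL + p)
			if err == nil {
				resp.Body.Close()
			}
			if err != nil || resp.StatusCode != http.StatusOK {
				mu.Lock()
				pending = append(pending, p)
				mu.Unlock()
			}
		}(p)
	}
	wg.Wait()
	return pending
}

// waitConverged re-probes the still-pending paths until none remain or the
// timeout passes, and reports how long convergence took.
func waitConverged(baseURL string, paths []string, interval, timeout time.Duration) (time.Duration, error) {
	start := time.Now()
	remaining := paths
	for {
		remaining = pendingPaths(baseURL, remaining, 50)
		if len(remaining) == 0 {
			return time.Since(start), nil
		}
		if time.Since(start) > timeout {
			return time.Since(start), fmt.Errorf("%d/%d paths still pending", len(remaining), len(paths))
		}
		fmt.Printf("%d/%d paths pending, retrying in %s\n", len(remaining), len(paths), interval)
		time.Sleep(interval)
	}
}

// benchPaths returns the hypothetical paths /route/0 .. /route/n-1.
func benchPaths(n int) []string {
	paths := make([]string, n)
	for i := range paths {
		paths[i] = fmt.Sprintf("/route/%d", i)
	}
	return paths
}

// fakeGateway is a stand-in that answers 404 until readyAfter has elapsed,
// loosely imitating a gateway that has not programmed its routes yet.
func fakeGateway(readyAfter time.Duration) *httptest.Server {
	ready := time.Now().Add(readyAfter)
	return httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		if time.Now().Before(ready) {
			http.NotFound(w, r)
			return
		}
		fmt.Fprintln(w, "ok")
	}))
}

func main() {
	srv := fakeGateway(100 * time.Millisecond)
	defer srv.Close()

	elapsed, err := waitConverged(srv.URL, benchPaths(20), 50*time.Millisecond, 2*time.Second)
	if err != nil {
		panic(err)
	}
	fmt.Printf("all 20 routes converged in %s\n", elapsed.Round(time.Millisecond))
}
```

Re-probing only the pending paths keeps each retry cheap as the count shrinks, at the cost of not noticing a route that was serving and later stopped.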

=== RUN TestRoutedPaths

=== RUN TestRoutedPaths/gw-nginx

gateway_test.go:443: 5000/5000 paths pending, retrying in 5s

gateway_test.go:443: 105/5000 paths pending, retrying in 5s

gateway_test.go:443: 105/5000 paths pending, retrying in 5s

gateway_test.go:443: 87/5000 paths pending, retrying in 5s

gateway_test.go:335: applied 5000 HTTPRoutes

gateway_test.go:443: 3/5000 paths pending, retrying in 5s

gateway_test.go:431: all 5000 routes converged in 42.047s

=== RUN TestRoutedPaths/gw-istio

gateway_test.go:443: 5000/5000 paths pending, retrying in 5s

gateway_test.go:443: 1167/5000 paths pending, retrying in 5s

gateway_test.go:443: 93/5000 paths pending, retrying in 5s

gateway_test.go:443: 93/5000 paths pending, retrying in 5s

gateway_test.go:443: 32/5000 paths pending, retrying in 5s

gateway_test.go:443: 32/5000 paths pending, retrying in 5s

gateway_test.go:335: applied 5000 HTTPRoutes

gateway_test.go:431: all 5000 routes converged in 41.981s

=== RUN TestRoutedPaths/gw-traefik

gateway_test.go:443: 5000/5000 paths pending, retrying in 5s

gateway_test.go:443: 4939/5000 paths pending, retrying in 5s

gateway_test.go:443: 4921/5000 paths pending, retrying in 5s

gateway_test.go:335: applied 5000 HTTPRoutes

gateway_test.go:443: 4871/5000 paths pending, retrying in 5s

gateway_test.go:443: 4856/5000 paths pending, retrying in 5s

gateway_test.go:443: 4833/5000 paths pending, retrying in 5s

gateway_test.go:443: 4827/5000 paths pending, retrying in 5s

gateway_test.go:443: 4823/5000 paths pending, retrying in 5s

gateway_test.go:443: 4820/5000 paths pending, retrying in 5s

gateway_test.go:443: 4816/5000 paths pending, retrying in 5s

gateway_test.go:443: 4811/5000 paths pending, retrying in 5s

...

--- FAIL: TestRoutedPaths (441.53s)

--- PASS: TestRoutedPaths/gw-nginx (56.68s)

--- PASS: TestRoutedPaths/gw-istio (79.96s)

--- FAIL: TestRoutedPaths/gw-traefik (304.88s)

--- FAIL: TestRoutedPaths (410.94s)

--- PASS: TestRoutedPaths/gw-nginx (60.82s)

--- PASS: TestRoutedPaths/gw-istio (46.98s)

--- FAIL: TestRoutedPaths/gw-traefik (303.13s)

Traffic management during updates

Once we had loaded 5000 routes, we realized the target was overly ambitious. Re-running the k6 benchmark at 10k RPS through the gateway produced failures, with high latency and high numbers of concurrent requests both causing problems. We settled on a more reasonable target of 1,000 routes for functional performance validation and re-ran the tests successfully.

Testing route updates while traffic was flowing proved revealing. Istio and Traefik handled them without significant drawbacks, whereas NGINX showed latency issues whenever an HTTPRoute was modified, especially with 1,000 routes configured. Response times rose noticeably during each update.

NGINX latency during 10k RPS updates with 150ms simulated latency

Final Decision

After thoroughly evaluating all three implementations and their performance characteristics, we opted for Istio as our solution. Its reliability and consistent performance throughout our tests stood out as key advantages. While all options had their merits, Istio’s advanced feature set intrigued us, suggesting potential for future-proofing our service.

The migration will begin in the coming weeks. I’ll document any notable findings and challenges along the way in a follow-up article.